Weather forecasting informs everyday decisions, such as what to wear in the morning or whether the kids’ baseball game is on this weekend. But all sorts of businesses also need this information:

- Farmers, to decide the best time to plant, spray or harvest;

- Commodity traders, to inform their trading positions;

- Gas and electric utilities, to understand threatening weather conditions;

- Oil and gas producers, to be aware of potentially hazardous weather events that could affect production;

- Transportation companies, to prepare for potential shipping and logistics disruptions;

- Humanitarian assistance and disaster relief (HADR) missions, to pre-stage relief resources and estimate the damage that might occur;

- And military and intelligence agencies, to inform the strategy and tactics of their missions and operations.

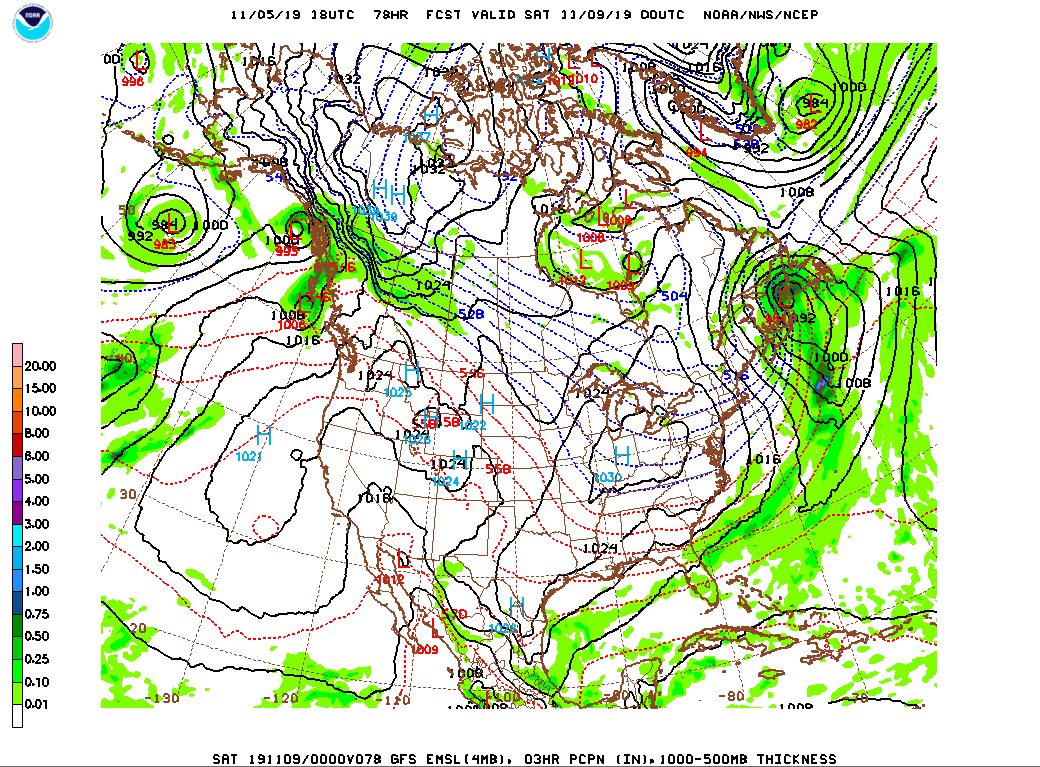

The U.S. National Oceanic and Atmospheric Administration (NOAA) produces a detailed global weather forecast multiple times per day that describes what the weather will be like over the coming hours, days and even weeks. NOAA creates this forecast using a long, complex code base and a world-class supercomputer. On a normal day, it takes NOAA and its National Centers for Environmental Prediction (NCEP) about 100 minutes to run its main numerical weather prediction (NWP) model, the Finite Volume Cubed-Sphere Global Forecast System (FV3GFS).

NOAA’s supercomputers are built to run the massively parallel code bases required to generate these numerical weather forecasts. Computing power like this is expensive: a single machine carries a price tag in the tens of millions of dollars. On top of that come the costs of the teams who maintain the machines and of the electricity needed to power and cool them. For more info on NOAA’s supercomputer fleet, check out this article or this one.

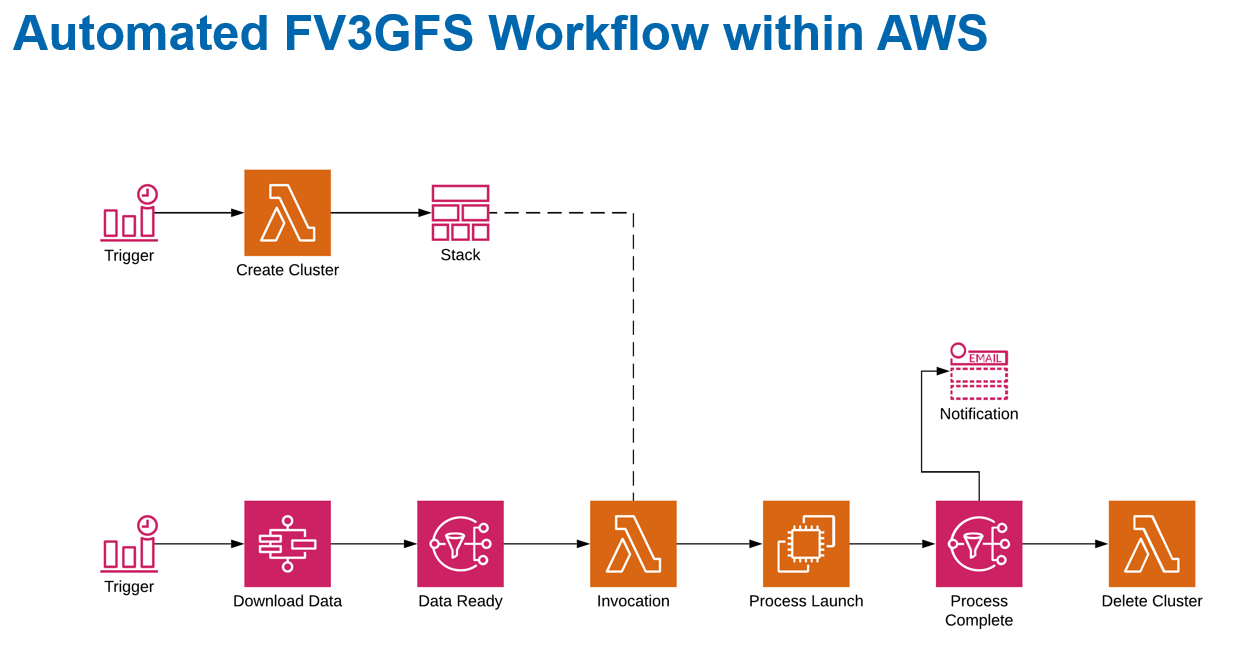

Maxar’s WeatherDesk™ team decided there had to be a better, faster and less capital-intensive way to generate the forecast. This new approach also had to give us more control over the output, both in forecast skill and in the speed at which the data was created. We reached out to Amazon Web Services (AWS) to build a cloud-based, high performance computing (HPC) architecture. By combining our teams of data scientists and engineers, Maxar and AWS created an automated workflow that uses a whole suite of AWS tools and services while freeing Maxar’s physical scientists to dig into the Earth science side of NWP.

When we started running the model in a small-scale HPC environment, we ran into challenges matching our output to what NOAA was providing through its public access points. We figured that if we couldn’t match NOAA’s output using the same configuration of their FV3GFS model, something was awry in our system. Thanks to some late nights, coffee and a ton of passion and dedication from the Maxar and AWS team, we combed through the FV3GFS source code, the various supporting libraries and the system architecture configs until the output matched. From there, it was all about scaling, developing the Continuous Integration & Continuous Delivery (CI/CD) workflows, establishing the post-processing and designing the subsequent model configurations that would let us not only produce forecasts faster and more cheaply (i.e., more cost-efficient and less capital-intensive) but also make better forecasts!

Following that development phase of our program, we established a system that automatically builds itself each time we want to run a configuration of the FV3GFS (the NOAA weather model we focused on) and then automatically tears itself down after the run completes. We have a series of health checks, file/data transfer mechanisms and auto-scaling, self-healing instances, along with the necessary parallelization and file system structures, to do this at breakneck speed while staying resilient and durable.

This setup lets us produce a forecast similar to NOAA’s, but we get the results a lot faster. Depending on the configuration (again, flexibility thanks to the AWS Cloud) and the various customer requirements, we can cut the model’s computation time in half, and we have our sights set on likely finding another 50% reduction in computation time relative to the NOAA setup. Additionally, the cost to develop and operate this system is significantly below the $500+ million, 10-year program deal NOAA inked a few years ago, not to mention the additional tens of millions they’ve allocated in subsequent funding for their fleet of supercomputers. Admittedly, that’s not necessarily a fair comparison, since their machines are humming along doing all sorts of computations beyond just the FV3GFS. But we also didn’t need an act of Congress to go from “zero” to “full production,” and we did it in a matter of weeks (again, kudos to the AWS support team and the Maxar data scientists and engineers who rocked this)!

At the end of the day, running this weather model on AWS allowed us to pay only for the HPC we needed, when we needed it. We don’t have to burden ourselves or our engineers with physical compute infrastructure and its maintenance. We don’t have to worry about the electricity, the footprint or the physical (or even most of the cyber) security; we just get to focus on what our customers want and need while giving our engineers the resources to meet those requirements. For Maxar, this is the way of the future; in many cases, that future is today.

Getting the weather forecast faster and for less expense means those farmers can make better decisions, allowing for more profitable operations and more food to feed a quickly growing global population; those traders can take more informed and more profitable positions; the oil and gas producers can better keep their human and capital resources out of harm’s way; transportation companies can keep their routes and goods on time and with more certainty; and the HADR and military users can know what to expect before the boots hit the ground or the wings take to the skies.

If you want to hear more about Maxar’s cloud-based HPC efforts, I’m speaking about the program at the AWS re:Invent 2019 HPC Meet-up this afternoon in Las Vegas. I’ll be sharing some lessons learned about HPC in the cloud and about working with the AWS Professional Services team, plus some insight into what’s coming next. I’ll also be speaking during the breakout session AIM227-S: Powering global-scale predictive intelligence using HPC on AWS. That presentation happens on Wednesday, Dec. 4, from 4-5 p.m. PT at the Venetian.

Travis Hartman is a Director within the Analytics Products division at Maxar. Travis supports product design, development and operational awesomeness for Maxar’s Earth Intelligence suite of analytics capabilities.