With DeepCore 1.4, users can take advantage of the powerful, new Open Neural Network Exchange (ONNX) format to more easily and rapidly deploy machine learning and AI models.

There has never been more interest, innovation or investment in Artificial Intelligence (AI) across the U.S. government (USG). On February 11, 2019, the President signed Executive Order 13859, Maintaining American Leadership in Artificial Intelligence [1]. This order launched the American AI Initiative, a concerted effort to promote and protect AI technology and innovation in the United States. Since then, several interagency bodies have released follow-on strategies and reports, including:

- The National Artificial Intelligence Research and Development Strategic Plan, from the National Science and Technology Council’s Select Committee on AI [2].

- The AIM Initiative, the Office of the Director of National Intelligence’s strategy for Augmenting Intelligence with Machines (AIM) [3].

- The recently released Interim Report of the congressionally mandated National Security Commission on AI, which presents the commission’s findings.

These documents outline the need for the United States and its allies to harness the potential of AI while addressing a broad range of important themes, from ensuring the ethical application, security and transparency of AI to protecting against adversarial AI. There is also a sense of urgency to ensure the United States develops and maintains its advantage against global threats by taking a whole-of-nation approach that brings together government, industry, academia and foreign allies. The U.S. is already facilitating these partnerships through data sharing, collaborative algorithm research and support for new AI/ML standards.

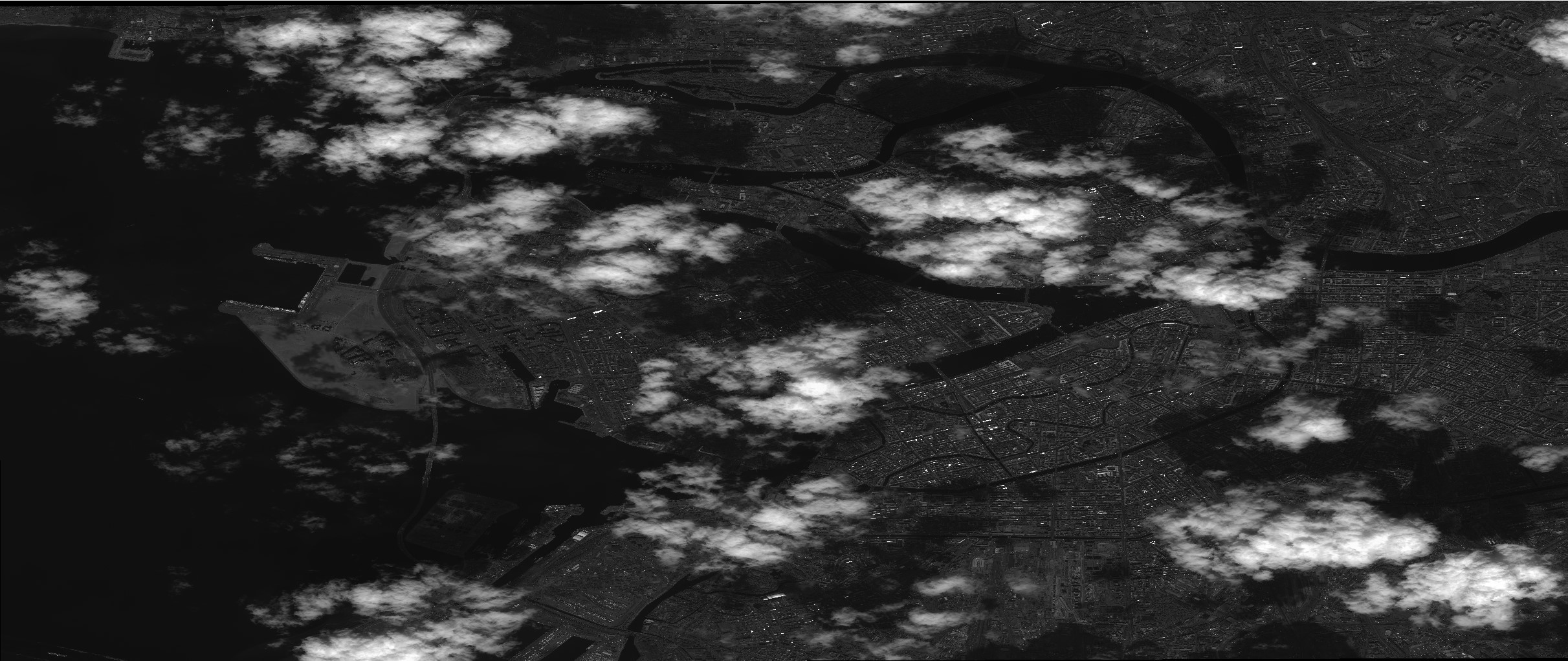

As a leading provider of Earth Intelligence and Space Infrastructure, Maxar is applying AI/ML to help our customers better understand and navigate our changing planet. In 2015, we began actively exploring applications of computer vision to accelerate the analysis of satellite imagery, with the vision of building algorithms that could characterize the millions of square kilometers of new data we collect each day. This is a challenging data science problem, given the wide variety of fixed and moving objects that can be found in 30-centimeter-resolution imagery. It is also a challenging data engineering problem, given that a single satellite image strip can cover nearly 600 square kilometers.

Maxar built the DeepCore suite of tools to enable users to quickly develop and deploy effective AI/ML models to derive insights from geospatial imagery using computer vision. We leverage DeepCore to support a variety of projects and have made the SDK available for free via an Apache 2.0 license. Through its innovative inference engine, DeepCore uses machine learning models to detect several different types of objects in geospatial imagery, including aircraft, rail lines and vehicles.

Now, Maxar’s DeepCore is taking a significant step forward in flexibility by integrating the ONNX format, an open standard created by a consortium of leading AI and ML companies, including Maxar [4]. ONNX provides framework interoperability: a model trained in one neural network framework can be exported to the ONNX format and then run for inference in another. This means that DeepCore can now use models developed and trained by a wide range of external organizations, which supports the inter-agency initiatives aimed at broad adoption of AI/ML models.

Why ONNX?

Organizations including Facebook, Microsoft, Amazon Web Services (AWS), NVIDIA and others started supporting ONNX to foster portability of neural network models. For the last few years, deep learning models have been built and trained in frameworks whose libraries made deployment in production systems a challenge. TensorFlow, PyTorch, Caffe and other machine learning libraries are widely supported by strong open source communities, but running their models meant that systems like DeepCore had to support each framework natively. With the release of DeepCore 1.4, ONNX support, based on ONNX 1.5 and the v10 ONNX operator set, handles some of this challenge.
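Because DeepCore 1.4 is described as building on the v10 operator set, it may help to check which operator set a model declares before trying it. The sketch below is illustrative only and is not part of DeepCore: the helper names and the limit constant are hypothetical, and it assumes that a model declaring a default-domain operator set of 10 or lower is the kind of model DeepCore 1.4 is meant to accept. Reading a file relies on the separately installed `onnx` Python package.

```python
from typing import Iterable, List, Tuple

# Assumption drawn from the text: DeepCore 1.4 targets the v10 ONNX operator set.
MAX_SUPPORTED_OPSET = 10

# ONNX writes the default operator domain as "" ("ai.onnx" is an alias for it).
DEFAULT_DOMAINS = {"", "ai.onnx"}


def default_opset_version(opset_imports: Iterable[Tuple[str, int]]) -> int:
    """Return the default-domain operator set version a model declares."""
    for domain, version in opset_imports:
        if domain in DEFAULT_DOMAINS:
            return version
    raise ValueError("model declares no default-domain operator set")


def fits_opset_limit(opset_imports: Iterable[Tuple[str, int]],
                     limit: int = MAX_SUPPORTED_OPSET) -> bool:
    """True when the declared default-domain opset does not exceed the limit."""
    return default_opset_version(opset_imports) <= limit


def read_opset_imports(path: str) -> List[Tuple[str, int]]:
    """Read (domain, version) pairs from an ONNX file (needs the onnx package)."""
    import onnx  # imported lazily so the helpers above work without it

    model = onnx.load(path)
    return [(entry.domain, entry.version) for entry in model.opset_import]
```

With the `onnx` package installed, `fits_opset_limit(read_opset_imports("model.onnx"))` would report whether a file (the name here is a placeholder) stays within the v10 operator set. A model that declares a newer operator set would typically need to be re-exported from its training framework with a lower target.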

ONNX support expands the universe of models able to run in DeepCore. If you have an ONNX model, particularly one for object detection or segmentation, we’d love to hear from you: http://www.deepcore.io/#contact.

[2] https://www.nitrd.gov/pubs/National-AI-RD-Strategy-2019.pdf

[3] https://www.dni.gov/files/ODNI/documents/AIM-Strategy.pdf

[4] https://onnx.ai/